For quite some time, we have used Apache Solr in one of our projects to index data, so that searches hit the Solr server instead of always going to the main database and creating a bottleneck there. We use the Sunspot gem, a Ruby library that integrates Apache Solr with Rails applications. Recently we decided to try the Apache SolrCloud architecture in the same application, to manage our daily growing Solr data more efficiently with this powerful feature of Apache Solr.

In brief, you can think of Solr as another database where your data is indexed and stored as documents, which can be sent and returned as XML or JSON. You can query the Solr server with different combinations of search criteria using the Solr query language. You can read more about Apache Solr here for more details and a better understanding.

In our case, we have a single dedicated Solr server machine to index and store our data, which leads to increased load during peak times and as the indexed data grows. So we decided to experiment with distributing our indexed data over multiple nodes (physical machines) to achieve load balancing and distributed indexing/searching. This also gives us a scalability option: we can add extra nodes to the SolrCloud setup to meet growing index storage needs while keeping the load distributed.

Basically, SolrCloud is a cluster of Solr servers that combines fault tolerance and high availability. Its configuration is kept centrally in ZooKeeper, which tracks the cluster state so that each request can be sent to the right node based on the configuration files and schema of the SolrCloud setup. You can read in detail about SolrCloud here.

In this article, I will briefly show how you can create your own SolrCloud simulation on a local machine. First of all, you need to have Apache Solr installed on your system, following the installation steps for your platform. Once Solr is installed and ready to use, you can run the following command to create a SolrCloud setup with a collection of shards and nodes -

solr -e cloud

You just need to answer a few questions, such as the number of nodes (machines) and the number of shards (partitions) you want in the cluster, and your SolrCloud setup will be ready with the specified number of nodes listening on their ports. Once Solr is running in cloud mode, you can use curl commands to index sample data into the SolrCloud shards and search it.

More details on setting up SolrCloud are here.
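Once the nodes are up, one way to confirm how shards and replicas are spread over them is the Collections API `CLUSTERSTATUS` action, which returns the cluster state as JSON. The following is a minimal Python sketch and not part of the setup I ran: `fetch_cluster_status` and `summarize_cluster` are hypothetical helper names, the base URL assumes the first node on its default port 8983, and `SAMPLE_STATUS` is hand-written in the shape of such a response purely for illustration.

```python
import json
from urllib.request import urlopen


def fetch_cluster_status(base_url="http://localhost:8983/solr"):
    """Fetch the cluster state from a running SolrCloud node (assumed URL)."""
    url = base_url + "/admin/collections?action=CLUSTERSTATUS&wt=json"
    with urlopen(url) as response:
        return json.load(response)


def summarize_cluster(status):
    """Map each collection to {shard name: [(node name, replica state), ...]}."""
    summary = {}
    collections = status.get("cluster", {}).get("collections", {})
    for collection_name, collection in collections.items():
        shards = {}
        for shard_name, shard in collection.get("shards", {}).items():
            replicas = shard.get("replicas", {}).values()
            shards[shard_name] = sorted(
                (replica.get("node_name"), replica.get("state"))
                for replica in replicas
            )
        summary[collection_name] = shards
    return summary


# Hand-written sample (illustrative only, not captured from a real cluster).
SAMPLE_STATUS = {
    "cluster": {
        "collections": {
            "gettingstarted": {
                "shards": {
                    "shard1": {"replicas": {
                        "core_node1": {"node_name": "localhost:8983_solr", "state": "active"},
                        "core_node2": {"node_name": "localhost:7574_solr", "state": "active"},
                    }},
                    "shard2": {"replicas": {
                        "core_node3": {"node_name": "localhost:8984_solr", "state": "active"},
                        "core_node4": {"node_name": "localhost:7575_solr", "state": "active"},
                    }},
                }
            }
        }
    }
}

if __name__ == "__main__":
    # Swap SAMPLE_STATUS for fetch_cluster_status() when a cluster is running.
    print(json.dumps(summarize_cluster(SAMPLE_STATUS), indent=2))
```

The summary function is kept separate from the HTTP call so it can be tried without a running cluster.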

Following is a snapshot of the SolrCloud setup process, which I tried based on the above steps -

solr -e cloud

Welcome to the SolrCloud example!

This interactive session will help you launch a SolrCloud cluster on your local workstation.

To begin, how many Solr nodes would you like to run in your local cluster? (specify 1-4 nodes) [2]:

4

Ok, let's start up 4 Solr nodes for your example SolrCloud cluster.

Please enter the port for node1 [8983]:

Please enter the port for node2 [7574]:

Please enter the port for node3 [8984]:

Please enter the port for node4 [7575]:

Starting up Solr on port 8983 using command:

/usr/local/Cellar/solr/6.4.1/bin/solr start -cloud -p 8983 -s "/usr/local/Cellar/solr/6.4.1/example/cloud/node1/solr"

Started Solr server on port 8983 (pid=2688). Happy searching!

Starting up Solr on port 7574 using command:

/usr/local/Cellar/solr/6.4.1/bin/solr start -cloud -p 7574 -s "/usr/local/Cellar/solr/6.4.1/example/cloud/node2/solr" -z localhost:9983

Started Solr server on port 7574 (pid=3016). Happy searching!

Starting up Solr on port 8984 using command:

/usr/local/Cellar/solr/6.4.1/bin/solr start -cloud -p 8984 -s "/usr/local/Cellar/solr/6.4.1/example/cloud/node3/solr" -z localhost:9983

Started Solr server on port 8984 (pid=3261). Happy searching!

Starting up Solr on port 7575 using command:

/usr/local/Cellar/solr/6.4.1/bin/solr start -cloud -p 7575 -s "/usr/local/Cellar/solr/6.4.1/example/cloud/node4/solr" -z localhost:9983

Started Solr server on port 7575 (pid=3506). Happy searching!

Now let's create a new collection for indexing documents in your 4-node cluster.

Please provide a name for your new collection: [gettingstarted]

How many shards would you like to split gettingstarted into? [2]

2

How many replicas per shard would you like to create? [2]

2

Please choose a configuration for the gettingstarted collection, available options are:

basic_configs, data_driven_schema_configs, or sample_techproducts_configs [data_driven_schema_configs]

Connecting to ZooKeeper at localhost:9983 ...

INFO - 2017-08-31 15:59:37.524; org.apache.solr.client.solrj.impl.ZkClientClusterStateProvider; Cluster at localhost:9983 ready

Uploading /usr/local/Cellar/solr/6.4.1/server/solr/configsets/data_driven_schema_configs/conf for config gettingstarted to ZooKeeper at localhost:9983

Creating new collection 'gettingstarted' using command:

http://localhost:8983/solr/admin/collections?action=CREATE&name=gettingstarted&numShards=2&replicationFactor=2&maxShardsPerNode=1&collection.configName=gettingstarted
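The CREATE call at the end of the transcript is an ordinary HTTP request to the Collections API, so the same collection could in principle be created without the interactive script. Below is a rough Python sketch that only builds such a URL from the values used above (2 shards, 2 replicas per shard, at most 1 shard replica per node). `create_collection_url` is a hypothetical helper name, and the base URL assumes the first node on port 8983.

```python
from urllib.parse import urlencode


def create_collection_url(name, num_shards, replication_factor,
                          max_shards_per_node, config_name,
                          base_url="http://localhost:8983/solr"):
    """Build a Collections API CREATE URL; nothing is sent to Solr here."""
    params = {
        "action": "CREATE",
        "name": name,
        "numShards": num_shards,
        "replicationFactor": replication_factor,
        "maxShardsPerNode": max_shards_per_node,
        "collection.configName": config_name,
    }
    return base_url + "/admin/collections?" + urlencode(params)


if __name__ == "__main__":
    print(create_collection_url("gettingstarted", 2, 2, 1, "gettingstarted"))
```

With 2 shards and 2 replicas each, the collection needs 4 cores in total, which is why 4 nodes with `maxShardsPerNode=1` is enough.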

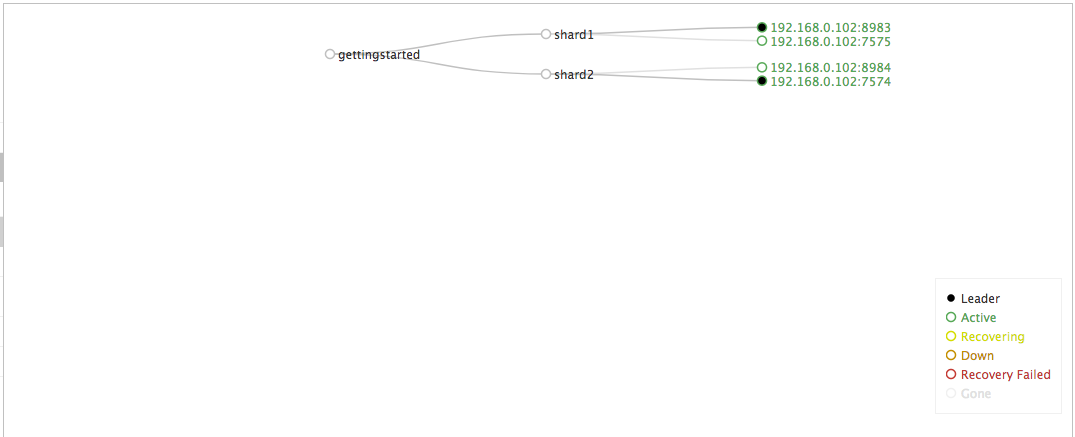

Following is the SolrCloud structure diagram you can see after visiting Solr admin UI after running SolrCloud setup as mentioned above -

So this is a SolrCloud collection with 2 shards, simulated on 4 nodes (machines) listening on different ports, where each shard uses 2 of these 4 nodes to store its indexed data. You can split your index across the 2 shards by routing each index/search request to a specific shard, based on the document routing configured for the collection (this configuration is kept in ZooKeeper). There are 2 document routing algorithms, which you can find here:

- Implicit routing - where you have to pass an explicit parameter naming the shard to direct your request to.

- compositeId routing - where you send documents with a prefix in the document ID, which is used to calculate the hash Solr uses to determine the shard a document is stored in and retrieved from.
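To make the prefix idea concrete, here is a small Python sketch of how a prefixed ID such as `tenantA!doc1` can be mapped to a shard. It follows my understanding of the compositeId scheme: a 32-bit MurmurHash3 is computed for the prefix and for the rest of the ID, the upper 16 bits are taken from the prefix hash and the lower 16 bits from the rest, and the result is looked up in the shards' hash ranges. Treat it as an approximation rather than Solr's actual code. The function names and sample IDs are made up for illustration, only the single `prefix!id` form is handled, and the even split of the hash range is an assumption.

```python
MASK32 = 0xFFFFFFFF


def _rotl32(value, bits):
    """Rotate a 32-bit value left by the given number of bits."""
    return ((value << bits) | (value >> (32 - bits))) & MASK32


def _mix_block(k):
    """MurmurHash3 mixing step applied to one 4-byte block (or the tail)."""
    k = (k * 0xCC9E2D51) & MASK32
    k = _rotl32(k, 15)
    return (k * 0x1B873593) & MASK32


def murmur3_32(data, seed=0):
    """MurmurHash3 x86 32-bit of a bytes object, as an unsigned int."""
    h = seed & MASK32
    full = len(data) - len(data) % 4
    for i in range(0, full, 4):
        h ^= _mix_block(int.from_bytes(data[i:i + 4], "little"))
        h = _rotl32(h, 13)
        h = (h * 5 + 0xE6546B64) & MASK32
    if full < len(data):
        h ^= _mix_block(int.from_bytes(data[full:], "little"))
    h ^= len(data)
    h ^= h >> 16
    h = (h * 0x85EBCA6B) & MASK32
    h ^= h >> 13
    h = (h * 0xC2B2AE35) & MASK32
    h ^= h >> 16
    return h


def composite_hash(doc_id):
    """Upper 16 bits from the prefix hash, lower 16 bits from the rest."""
    if "!" not in doc_id:
        return murmur3_32(doc_id.encode("utf-8"))
    prefix, rest = doc_id.split("!", 1)
    return ((murmur3_32(prefix.encode("utf-8")) & 0xFFFF0000)
            | (murmur3_32(rest.encode("utf-8")) & 0x0000FFFF))


def shard_for(doc_id, num_shards=2):
    """Pick a 1-based shard number by splitting the signed 32-bit range evenly."""
    h = composite_hash(doc_id)
    signed = h - 0x100000000 if h >= 0x80000000 else h
    width = 0x100000000 // num_shards
    return min((signed + 0x80000000) // width, num_shards - 1) + 1


if __name__ == "__main__":
    for doc_id in ("tenantA!doc1", "tenantA!doc2", "tenantB!doc1"):
        print(doc_id, "-> shard", shard_for(doc_id))
```

Because the upper bits come only from the prefix, all documents sharing a prefix land in the same shard when there are 2 shards, which is what lets a search for that prefix be limited to one shard.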

The following curl requests use implicit routing to index and search data in the shards created in the above setup -

1. Index request -

curl -X POST -H 'Content-Type: application/json' 'http://localhost:8983/solr/gettingstarted/update' --data-binary '[{"id":"Contact 20","address_present_b":"false","email_address_present_b":"false","primary_id_i":"19","last_name_text_edge":"Contact","shard_name":"shard_1"}]'

2. Search request to shard_1 -

curl 'http://localhost:8983/solr/gettingstarted/select?q=*:*&indent=true&shards=shard_1'

3. Search request to shard_2 -

curl 'http://localhost:8983/solr/gettingstarted/select?q=*:*&wt=xml&indent=true&shards=shard_2'

4. Check SolrCloud status with the healthcheck command -

solr healthcheck -c gettingstarted
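The index and search requests above can also be prepared from a script. The following Python sketch builds the same update request and shard-restricted search URLs using only the standard library. It is a rough illustration: `build_index_request` and `build_search_url` are hypothetical helper names, the collection URL assumes the local node on port 8983, and nothing is sent to Solr by the helpers themselves.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request

# Assumed local node and the collection created by the example setup.
SOLR_COLLECTION_URL = "http://localhost:8983/solr/gettingstarted"


def build_index_request(docs, collection_url=SOLR_COLLECTION_URL):
    """Build (but do not send) a JSON update request for a list of documents."""
    return Request(
        collection_url + "/update",
        data=json.dumps(docs).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def build_search_url(query="*:*", shards=None, collection_url=SOLR_COLLECTION_URL):
    """Build a select URL, optionally restricted to the named shards."""
    params = {"q": query, "wt": "json", "indent": "true"}
    if shards:
        params["shards"] = ",".join(shards)
    return collection_url + "/select?" + urlencode(params)


if __name__ == "__main__":
    doc = {
        "id": "Contact 20",
        "address_present_b": "false",
        "email_address_present_b": "false",
        "primary_id_i": "19",
        "last_name_text_edge": "Contact",
        "shard_name": "shard_1",
    }
    request = build_index_request([doc])
    print(request.get_method(), request.full_url)
    print(build_search_url(shards=["shard_1"]))
```

Passing the built request or URL to `urllib.request.urlopen` would send it to a running node. Letting `urlencode` escape the query also avoids the shell quoting issues that `&` and `*` cause on the command line.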

So far I have not found any direct provision for working with a SolrCloud setup in a Rails application using the Sunspot gem, which we use. We are researching it further, and I will post an update once we are up and running with our SolrCloud setup in the Rails application.

Any queries and feedback are welcome.

Thank you.